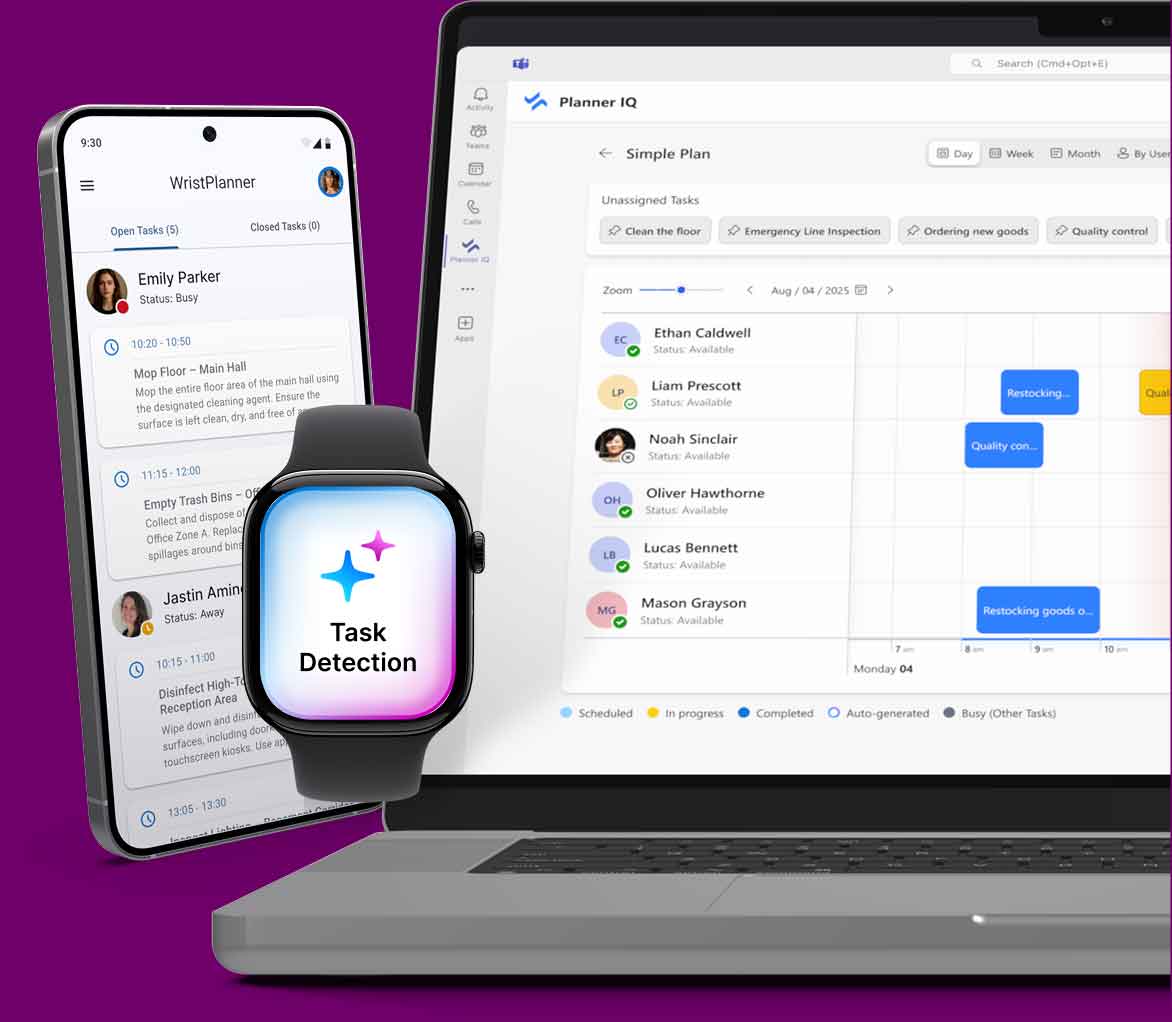

Workers complete tasks. Your smartwatch sees it. The system knows it. Everything updates in real-time.

Motion IQ uses advanced AI to recognize work through movement, location, and time—not clicks or inputs. Tasks close automatically. Data flows instantly. Workers stay focused. Operations stay synchronized.

Motion IQ watches three things simultaneously: how workers move, where they are, and how long they work. From these three signals, it recognizes when a task is complete.

The smartwatch sensors detect every motion—how fast, in what direction, with what intensity. Picking up a box looks different from writing. Walking looks different from standing. The system learns thousands of motion patterns.

Location (zone-based tracking), time of day, and work schedule provide crucial context. The same motion pattern means different things at different times and places. Lifting in a warehouse has different implications than lifting in an office.

How long an activity lasts and how often it repeats help the model distinguish between a momentary action and a completed task. The system understands task boundaries: when work starts and when it's actually finished.

Motion is converted into patterns.

Raw sensor data becomes recognizable features—the "signature" of a task.
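As a rough illustration of this step, the sketch below reduces one window of accelerometer samples to a small feature vector. The `extract_features` name, the window layout, and the choice of features are assumptions made for illustration; they are not a description of Motion IQ's actual feature set.

```python
import math
from statistics import mean, pstdev


def extract_features(window):
    """Summarize a window of (x, y, z) accelerometer samples.

    Hypothetical feature set: mean, standard deviation, and peak of the
    acceleration magnitude. A production system would likely use far more.
    """
    if not window:
        raise ValueError("window must contain at least one sample")
    magnitudes = [math.sqrt(x * x + y * y + z * z) for x, y, z in window]
    return {
        "mean_mag": mean(magnitudes),
        "std_mag": pstdev(magnitudes),
        "peak_mag": max(magnitudes),
    }


# Made-up window of three samples, each with magnitude 5.0
features = extract_features([(3.0, 4.0, 0.0), (0.0, 0.0, 5.0), (0.0, 3.0, 4.0)])
```

A vector like this, computed over successive windows, is one simple way to picture what a motion "signature" could look like.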

Patterns are matched against known tasks.

The AI compares the current motion pattern against all learned task signatures and scores how confident it is.
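One simple way to picture this matching step is a similarity score between the current pattern and each stored signature. The sketch below uses cosine similarity as a stand-in; the task names, the vectors, and the `best_match` helper are hypothetical, and the actual model may work quite differently.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an all-zero vector matches nothing
    return dot / (norm_a * norm_b)


def best_match(pattern, signatures):
    """Return (task_name, score) for the most similar known signature."""
    if not signatures:
        raise ValueError("no task signatures to compare against")
    scores = {task: cosine_similarity(pattern, sig) for task, sig in signatures.items()}
    task = max(scores, key=scores.get)
    return task, scores[task]


# Hypothetical signatures for two tasks
signatures = {"lift_box": [1.0, 0.0, 0.0], "scan_shelf": [0.0, 1.0, 0.0]}
task, score = best_match([0.9, 0.1, 0.0], signatures)
```

Here the current pattern lies much closer to the `lift_box` signature, so that task gets the higher score.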

Context confirms or adjusts confidence.

If the location, time, and worker schedule match, confidence goes up. If they don't match, confidence is recalculated.
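The context check can be sketched as a small adjustment applied to the motion-match score. The boost and penalty values, the context keys, and the `adjust_confidence` function are all assumptions for illustration; the source does not say how confidence is recalculated.

```python
def adjust_confidence(score, context, expected, boost=0.1, penalty=0.2):
    """Nudge a motion-match score up or down using context signals.

    `context` holds what was observed (for example zone and shift) and
    `expected` holds what the matched task normally requires. Both the
    keys and the boost/penalty sizes are illustrative assumptions.
    """
    adjusted = score
    for key, expected_value in expected.items():
        if context.get(key) == expected_value:
            adjusted += boost
        else:
            adjusted -= penalty
    # Keep the result a valid confidence in [0, 1]
    return max(0.0, min(1.0, adjusted))


# Zone matches, shift does not: one boost and one penalty are applied
result = adjust_confidence(
    0.5,
    context={"zone": "dock_a", "shift": "night"},
    expected={"zone": "dock_a", "shift": "day"},
)
```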

The system reports completion immediately.

When confidence is high enough and lasts long enough, the task completes.
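The "high enough and lasts long enough" rule resembles a threshold combined with a minimum dwell time. A minimal sketch, assuming one confidence score per time step and hypothetical values for `threshold` and `min_consecutive`:

```python
def detect_completion(scores, threshold=0.8, min_consecutive=3):
    """Return the index at which a task would be marked complete, or None.

    A task counts as complete once the confidence has stayed at or above
    `threshold` for `min_consecutive` steps in a row. Both parameters are
    illustrative assumptions, not documented Motion IQ settings.
    """
    run = 0
    for index, score in enumerate(scores):
        run = run + 1 if score >= threshold else 0
        if run >= min_consecutive:
            return index
    return None


# The single early spike is ignored; the sustained run completes the task
completed_at = detect_completion([0.9, 0.5, 0.85, 0.9, 0.95, 0.7])
```

Requiring a sustained run rather than a single high score is what keeps a momentary action from closing a task.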


Unlike systems that require buttons, barcodes, or manual entry, Motion IQ recognizes work by watching what workers actually do.

Unlike simple step counters, it understands context—the same motion means different things in different situations.

The system improves over time. Each task completion teaches the model. New variations are learned. Worker patterns are understood. Accuracy compounds.

Motion IQ is not designed to monitor or score individual performance. It does not track breaks, personal behavior, or movement outside of work contexts. The system focuses entirely on eliminating manual work and improving operational flow.

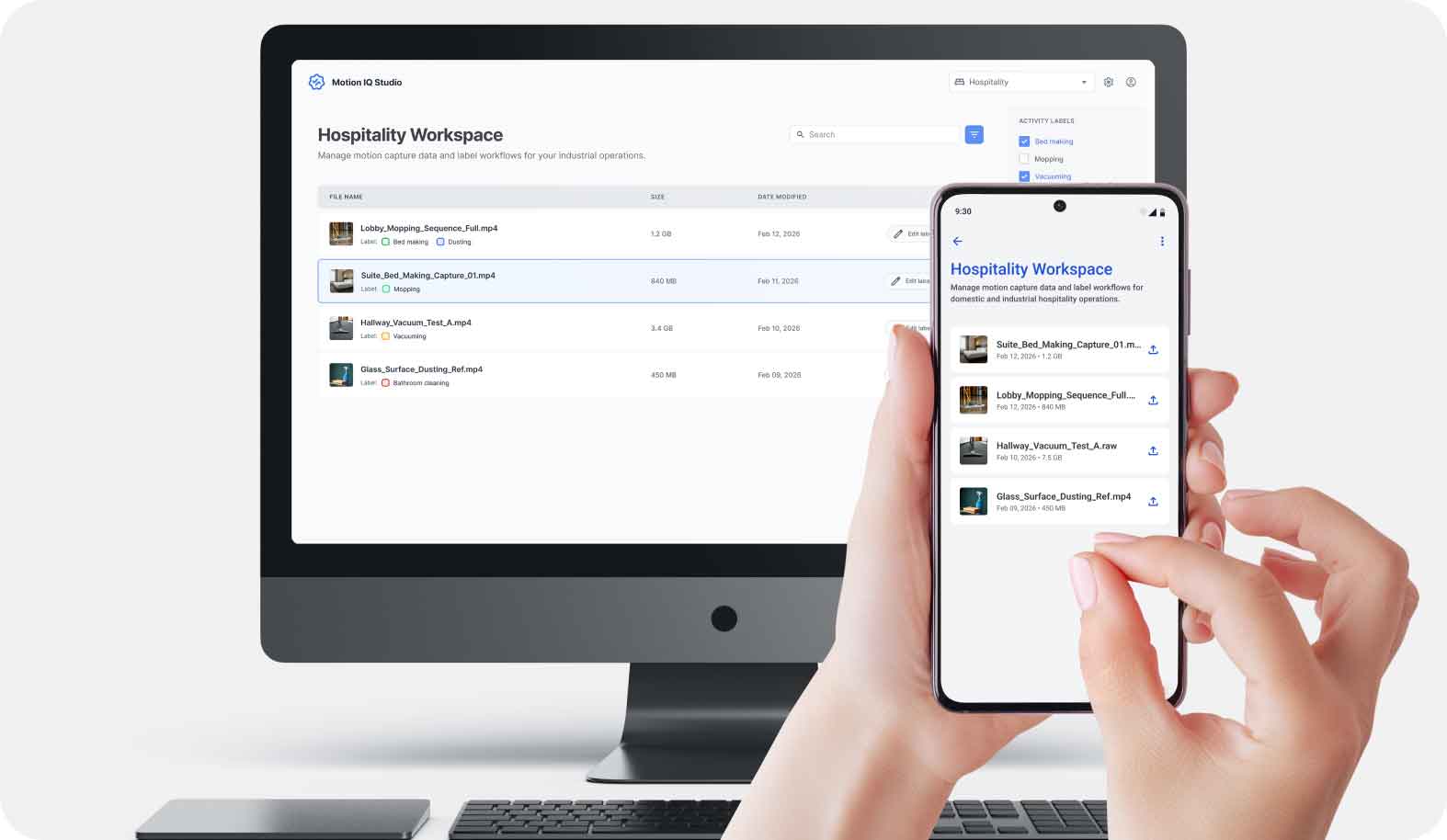

Motion IQ comes pre-trained to recognize hundreds of common work activities across industries. But every business has unique tasks, workflows, and motion patterns specific to its operations. Motion IQ Studio is the tool that lets your organization teach the AI to recognize your custom tasks with high accuracy.

A worker wears a smartwatch running the Motion IQ app on the wrist of their active working hand (right wrist for right-handed workers, left wrist for left-handed workers). At the same time, a manager or supervisor records the worker performing their tasks with a phone camera, using the Motion IQ mobile app (Android).

The two data streams—smartwatch IMU sensors and phone video—capture the same work session from different perspectives. The smartwatch captures precise motion telemetry; the video captures what the work actually looks like.

When the work session ends, the system automatically synchronizes the motion sensor data from the smartwatch with the video recording. Timestamps and motion signatures align the two streams, creating a unified record of work motion paired with visual context.
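One common way to line up two recordings of the same session is to find the time shift at which their motion activity agrees best. The sketch below assumes both streams have already been reduced to per-step "motion energy" values at the same sampling rate; the `best_lag` helper and that preprocessing are assumptions, not a description of how Motion IQ performs synchronization.

```python
def best_lag(sensor_energy, video_energy, max_lag):
    """Find the shift (in steps) that best aligns video with sensor data.

    Returns the lag for which video_energy[i + lag] lines up most closely
    with sensor_energy[i], using a plain (unnormalized) cross-correlation
    over lags in [-max_lag, max_lag].
    """
    def correlation(lag):
        return sum(
            sensor_energy[i] * video_energy[i + lag]
            for i in range(len(sensor_energy))
            if 0 <= i + lag < len(video_energy)
        )

    return max(range(-max_lag, max_lag + 1), key=correlation)


# The same burst of motion appears two steps later in the video stream
lag = best_lag([0, 0, 1, 0, 0, 0], [0, 0, 0, 0, 1, 0], max_lag=3)
```

Device timestamps would typically give a coarse alignment first, with a search like this refining the remaining offset.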

This synchronized data is uploaded to the Motion IQ cloud platform.

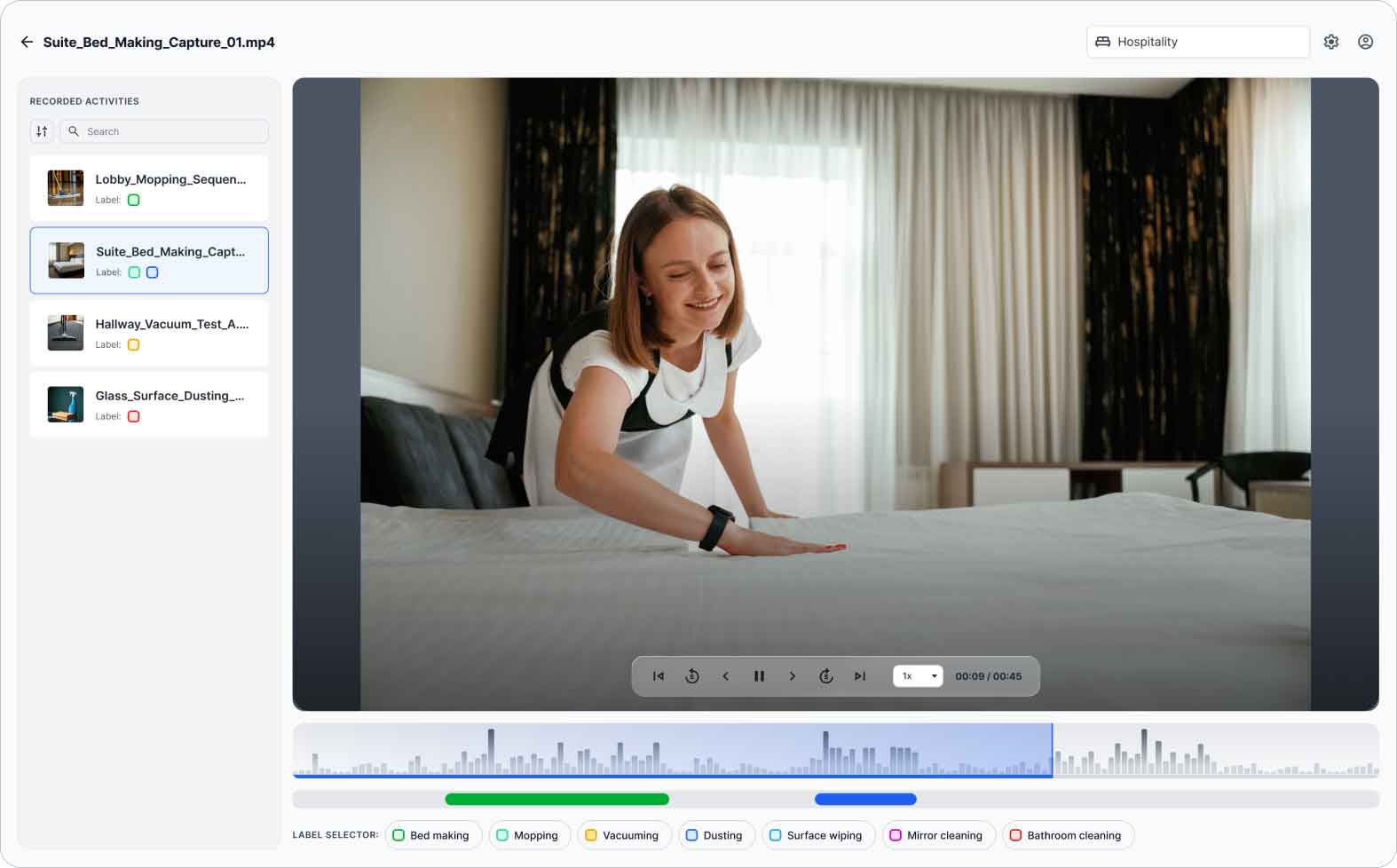

Your team logs into Motion IQ Studio (web application) and reviews the synchronized video + motion data. The key step: your team labels specific segments of the video with the task names and identifiers used in your operations system.

Critical: When labeling, your team marks only the active work periods. They explicitly skip or exclude downtime, breaks, waiting, and idle periods. This ensures the AI learns the motion signature of actual work, not interruptions or pauses.

Once labeled, the segment is locked. The AI now has a clean training sample: this motion signature = this task.
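The labeling step can be pictured as cutting the sensor stream into per-task samples and dropping everything outside a labeled segment. In this sketch the `Segment` type, its field names, and the task identifier are hypothetical; the frozen dataclass loosely mirrors the idea that a labeled segment is locked.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: a labeled segment cannot be modified
class Segment:
    start_s: float  # segment start, seconds from session start
    end_s: float    # segment end (exclusive)
    task_id: str    # task identifier from the operations system


def training_samples(samples, segments):
    """Pair each labeled segment with the sensor readings it covers.

    `samples` is a list of (timestamp_s, reading) tuples. Readings that
    fall outside every labeled segment (breaks, waiting, idle time) are
    simply never collected.
    """
    result = []
    for segment in segments:
        readings = [r for t, r in samples if segment.start_s <= t < segment.end_s]
        if readings:
            result.append((segment.task_id, readings))
    return result


# Five readings, one labeled segment covering seconds 1.0 to 3.0
stream = [(0.0, "a"), (1.0, "b"), (2.0, "c"), (3.0, "d"), (4.0, "e")]
labeled = training_samples(stream, [Segment(1.0, 3.0, "PICK-01")])
```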

Video + sensor fusion gives the AI rich training context. Workers don't have to label anything themselves; supervisors do it asynchronously, after the session has ended.

You define what tasks matter to your business. Motion IQ learns your taxonomy, not a generic one.

The video is used only for AI training, not for monitoring or performance evaluation. Once labeled and trained, videos can be deleted. Only the motion model is retained.

One labeled session can improve recognition for all workers doing similar tasks. As you label more variety, the system becomes more robust.

Eliminate manual task logging, reduce admin time by 70%, and get real-time visibility across your operations — without disrupting your teams.

Experience AI-powered task management built for real work — not desks.